4.4. Infrastructure and Operational Maturity

4.4.1. What “operational maturity” means for an L1

For a Proof-of-Stake L1, “operational maturity” is not just whether blocks are produced. It is the chain’s ability to run predictably and safely under normal load and under stress (upgrades, incidents, validator churn, endpoint outages). In practice, this maturity is visible in:

Critical public infrastructure (RPC/LCD/gRPC/FCD, explorers, indexers, archive access).

Reliability engineering (monitoring, alerting, SLOs/SLAs, status pages).

Release and incident operations (upgrade coordination, reproducible builds, runbooks, post-mortems, crisis communications).

Data availability (historical access, pruning policy transparency, exportability).

This section assesses Terra Classic’s operational maturity from evidence available in the report’s primary corpus.

4.4.2. Public endpoints: availability, redundancy, and the “no SLA” reality

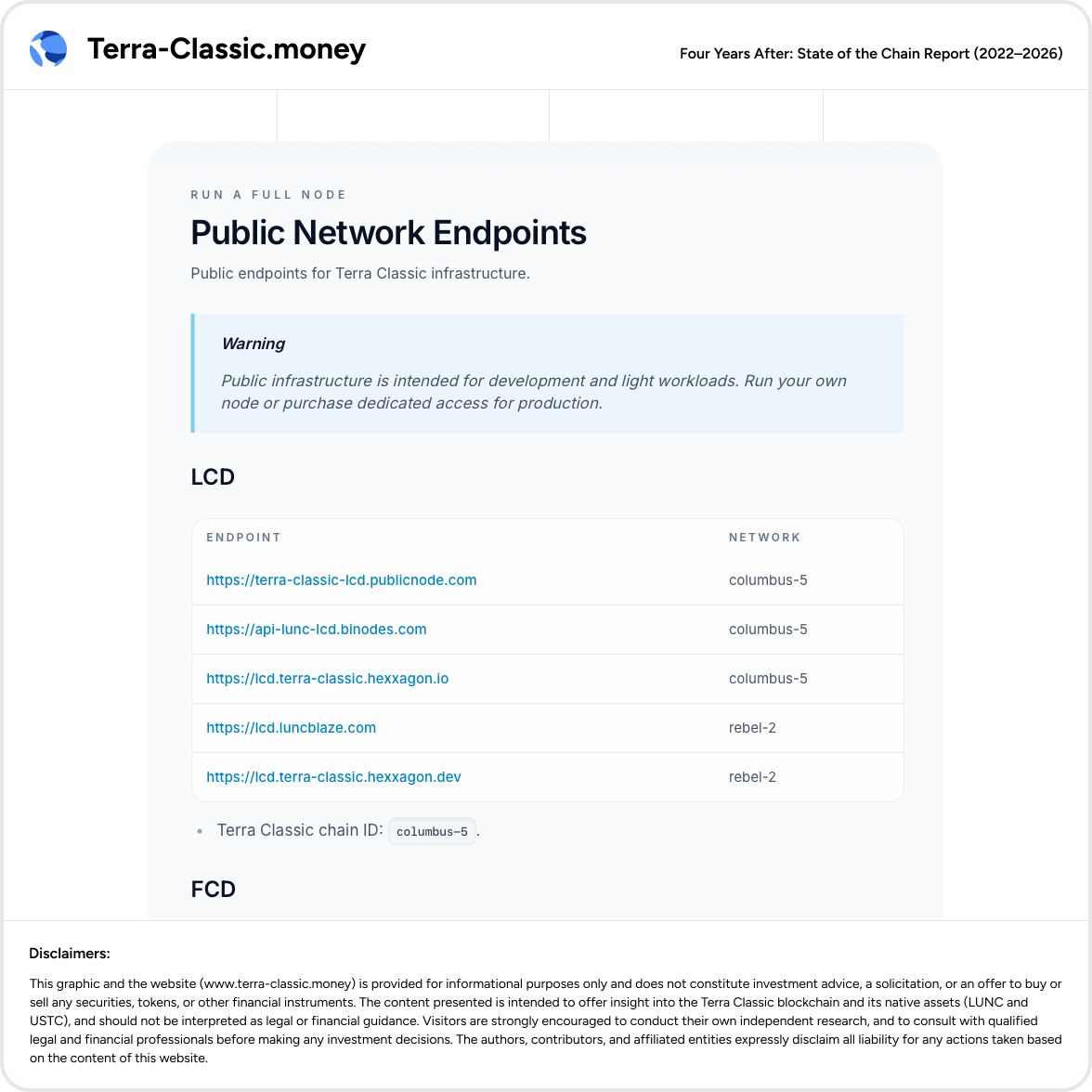

Terra Classic has multiple publicly documented endpoints (LCD, RPC, gRPC, and FCD), operated by third parties such as PublicNode, BiNodes, Hexxagon, and community operators. This is a meaningful positive: developers and wallets have redundant options rather than a single gateway.

However, the documentation explicitly positions these as public infrastructure for development and light workloads, with no SLA, and warns users to “run your own node” or buy dedicated access for production workloads.

Observed endpoint map (illustrative, not exhaustive):

LCD (REST): PublicNode, BiNodes, Hexxagon, LuncBlaze (and others listed in docs).

RPC (consensus/tx broadcast): PublicNode, BiNodes, Hexxagon, LuncBlaze.

gRPC: PublicNode, Hexxagon.

FCD: PublicNode, Hexxagon, LuncBlaze.

Operational implication: Terra Classic’s “public access layer” is functional but non-institutional: it relies on volunteer and third-party services operated on goodwill, and it provides no reliability guarantees.
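Redundant endpoints only help an integrator whose client actually rotates between providers. The sketch below illustrates that pattern under stated assumptions: the endpoint URLs are hypothetical placeholders, and `fetch` is an injected callable standing in for whatever HTTP client an integrator uses. Neither is taken from the Terra Classic documentation, and this is an illustration rather than a production client.

```python
from typing import Callable, Iterable, List, Optional, Tuple


def first_healthy(
    endpoints: Iterable[str],
    fetch: Callable[[str], dict],
) -> Tuple[Optional[str], Optional[dict], List[Tuple[str, str]]]:
    """Try each endpoint in order and return the first successful response.

    Returns (url, response, errors). `errors` lists every provider that
    failed before a healthy one was found, which is useful for logging.
    """
    errors: List[Tuple[str, str]] = []
    for url in endpoints:
        try:
            return url, fetch(url), errors
        except Exception as exc:  # e.g. timeout, rate limit, connection reset
            errors.append((url, repr(exc)))
    # Every provider failed; the caller decides whether to retry or alert.
    return None, None, errors


if __name__ == "__main__":
    # Hypothetical fetcher: provider A is simulated as down, provider B answers.
    def fake_fetch(url: str) -> dict:
        if "provider-a" in url:
            raise TimeoutError("simulated rate limit or outage")
        return {"served_by": url}

    url, response, errors = first_healthy(
        [
            "https://lcd.provider-a.example.invalid",
            "https://lcd.provider-b.example.invalid",
        ],
        fake_fetch,
    )
    print(url, response, errors)
```

Injecting `fetch` keeps the failover logic independent of any particular HTTP library and makes it testable without network access. Failover of this kind reduces exposure to a single provider, but it does not substitute for an SLA.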

4.4.3. Endpoint dependency concentration and “effective outages”

Even with multiple endpoints, practical dependency concentration can emerge:

wallets and dashboards converge on the most convenient provider,

rate limits or downtime at a dominant provider can appear as “chain is down,”

pruning policies or delayed indexing can distort user experience and analytics.

In mature ecosystems, this is mitigated by published SLOs and public incident communications. Terra Classic has no centralized equivalent of either (see 4.4.7).

Operational implication: endpoint failures are a meaningful availability and reputation risk, and they are often misattributed to core chain health.
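The distinction between an endpoint failure and a chain-level problem can be made mechanical by probing several providers twice and comparing the latest block heights they report. The following is a minimal sketch of that comparison; the provider names, heights, and result labels are hypothetical, and how the heights are obtained (for example from each provider’s status query) is left to the caller.

```python
from typing import Dict, Optional


def classify(
    heights_then: Dict[str, Optional[int]],
    heights_now: Dict[str, Optional[int]],
) -> str:
    """Classify access-layer state from two probe rounds taken some time apart.

    Each dict maps a provider name to the latest block height it reported,
    or None if the probe failed (timeout, rate limit, HTTP error).
    """
    reachable = [name for name, h in heights_now.items() if h is not None]
    advancing = [
        name
        for name in reachable
        if heights_then.get(name) is not None
        and heights_now[name] > heights_then[name]
    ]
    if not reachable:
        # Nothing answered: cannot tell a chain halt from an access-layer outage.
        return "unknown"
    if not advancing:
        # Providers answer, but none sees new blocks.
        return "possible_chain_halt"
    if len(advancing) == len(heights_now):
        return "healthy"
    # At least one provider sees new blocks while another is down or stalled.
    return "endpoint_issue"


if __name__ == "__main__":
    earlier = {"provider_a": 100, "provider_b": 100}
    later = {"provider_a": 105, "provider_b": None}
    print(classify(earlier, later))
```

The key design choice is that a single advancing provider is treated as evidence that the chain itself is live, so a failure elsewhere is attributed to the access layer rather than to consensus.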

4.4.4. Indexers, explorers, and “truth surfaces”

Terra Classic has multiple explorers and community dashboards that provide essential visibility for:

blocks and transactions,

validator views,

supply/burn data,

basic performance indicators.

This is a positive: the ecosystem is not dependent on a single explorer.

But operational maturity depends on the quality and standardization of these “truth surfaces”:

Many tools are third-party operated, with different assumptions and refresh behavior.

Some provide APIs; others provide only visual outputs.

There is no canonical “official analytics surface” that defines standard metrics, definitions, and exportability.

Operational implication: visibility exists, but it is tool-fragmented; independent verification is possible but more expensive than in foundation-grade ecosystems.

4.4.5. Data retention, pruning, and historical reproducibility

A critical operational maturity dimension is whether the chain’s public interfaces enable reproducible analysis across time.

The corpus records that RPC queries for older heights can fail with pruning errors (the recorded example reports a lowest retained height), which limits historical analysis over public RPC infrastructure.

This matters because it:

constrains accountability analysis (historical incidents, long-run performance),

increases the cost of long-horizon due diligence,

pushes “history” into private archives or removes it from public interfaces altogether.

Current state: the chain can be stable today while still being hard to audit historically without specialized archival nodes.
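An analyst can measure how much history a given public endpoint has lost by extracting the lowest retained height from the pruning error it returns. The sketch below assumes the error text contains a phrase of the form "lowest height is N", which is the wording commonly seen from Tendermint/CometBFT-style nodes; the exact message in the corpus is not reproduced here, and the sample string and height are hypothetical.

```python
import re
from typing import Optional

# Assumed wording of the pruning error; adjust the pattern if a node differs.
_LOWEST_HEIGHT = re.compile(r"lowest height is (\d+)")


def lowest_retained_height(error_message: str) -> Optional[int]:
    """Return the lowest height the node still serves, or None if not stated."""
    match = _LOWEST_HEIGHT.search(error_message)
    return int(match.group(1)) if match else None


def unavailable_blocks(wanted_from: int, lowest: int) -> int:
    """Count blocks in [wanted_from, lowest) that the endpoint cannot serve."""
    return max(0, lowest - wanted_from)


if __name__ == "__main__":
    # Hypothetical error text and height, for illustration only.
    sample = "height 1 is not available, lowest height is 12345"
    lowest = lowest_retained_height(sample)
    if lowest is not None:
        print(lowest, unavailable_blocks(1, lowest))
```

Running the same check against each public provider gives a simple, comparable measure of how far back each one can be audited.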

4.4.6. Upgrade operations as recurring operational stress tests

For PoS chains, major upgrades are recurring stress tests because they require:

coordination across validators (time zones, readiness),

synchronized binaries and dependencies,

coordination with exchanges and integrators (lead time constraints).

Validator chat evidence reflects operational constraints typical of non-institutional release management:

time zone coordination issues,

dependency/version discussions,

acknowledgment that major exchanges impose operational scheduling constraints (“Binance is the minimum”).

Separately, the Chapter 4 evidence shows Terra Classic has used scheduled halts for upgrades, making downtime more predictable than unplanned halts (but still an operational event).

Operational implication: Terra Classic can execute upgrades, but its release operations remain human-heavy, not institutionally standardized.
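The time zone constraint can be made concrete by projecting when a scheduled upgrade height will be reached and expressing that moment in each operator’s local offset. This is a rough sketch under stated assumptions: the heights, the average block time, and the UTC offsets are all hypothetical, and real block times drift, so the estimate should be recomputed as the height approaches.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Iterable


def estimate_upgrade_time(
    current_height: int,
    upgrade_height: int,
    avg_block_seconds: float,
    now: datetime,
) -> datetime:
    """Project when `upgrade_height` is reached, assuming a constant block time."""
    remaining = upgrade_height - current_height
    if remaining < 0:
        raise ValueError("upgrade height is already in the past")
    return now + timedelta(seconds=remaining * avg_block_seconds)


def in_offsets(moment: datetime, utc_offsets_hours: Iterable[int]) -> Dict[int, datetime]:
    """Express one moment in several fixed UTC offsets (no DST handling)."""
    return {
        hours: moment.astimezone(timezone(timedelta(hours=hours)))
        for hours in utc_offsets_hours
    }


if __name__ == "__main__":
    # Hypothetical values: 600 blocks remaining at 6 seconds per block.
    now = datetime(2024, 1, 1, 0, 0, tzinfo=timezone.utc)
    eta = estimate_upgrade_time(1_000, 1_600, 6.0, now)
    for offset, local in in_offsets(eta, [-5, 0, 9]).items():
        print(f"UTC{offset:+d}: {local.isoformat()}")
```

Fixed offsets are used instead of named time zones to keep the sketch dependency-free; a real coordination tool would need daylight-saving-aware zones.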

4.4.7. Incident response and operational transparency (explicit current-state finding)

Terra Classic does not have:

a documented incident response plan,

a canonical public status page,

or a standardized incident history surface.

As a result, users and integrators infer operational state through third-party dashboards, explorers, and social channels. This increases confusion during partial outages (endpoint or indexer failures) and raises the reputational cost of incidents, even when the core chain remains live.

Operational implication: absence of centralized operational transparency is itself a maturity gap, because it slows coordinated response and increases information asymmetry.
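In the absence of a canonical status page, an integrator can still quantify observed reliability from its own probe log. The sketch below computes availability and the remaining error budget against a target; the 99.5% target and the probe counts are hypothetical, since no SLO is published for Terra Classic public infrastructure.

```python
from typing import Sequence


def availability(probe_results: Sequence[bool]) -> float:
    """Fraction of probes that succeeded."""
    if not probe_results:
        raise ValueError("no probe results")
    return sum(probe_results) / len(probe_results)


def error_budget_remaining(probe_results: Sequence[bool], slo_target: float) -> float:
    """Share of the allowed failures not yet used (negative if the budget is exceeded)."""
    if not probe_results:
        raise ValueError("no probe results")
    failed = len(probe_results) - sum(probe_results)
    allowed = (1.0 - slo_target) * len(probe_results)
    if allowed == 0:
        # A 100% target leaves no budget: any failure exceeds it.
        return 0.0 if failed == 0 else float("-inf")
    return 1.0 - failed / allowed


if __name__ == "__main__":
    # Hypothetical log: 1,000 probes, 2 failures, measured against a 99.5% target.
    results = [True] * 998 + [False] * 2
    print(availability(results), error_budget_remaining(results, 0.995))
```

Self-measured figures of this kind describe one observer’s experience of one provider; they are not a substitute for a published, chain-wide incident history.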

4.4.8. Maturity assessment (current-state conclusion)

Terra Classic operational reality:

The chain is running and developers can integrate using multiple public endpoints.

Public infrastructure is provided by multiple third parties, explicitly without SLA and intended for light workloads.

There is no canonical operational transparency surface (status page, incident response plan, standardized incident log). This is an explicit finding of the report process.

Historical reproducibility is impaired by pruning constraints on public endpoints.

Upgrades remain the main operational risk moments due to coordination and dependency alignment constraints.

Interpretation: Terra Classic is operationally functional but not institutionally mature. The infrastructure posture resembles a community-operated chain more than a foundation-grade L1 with reliability engineering and transparency norms.

4.4.9. Key takeaways for investors

Access-layer reliability is best-effort: public endpoints are explicitly intended for development/light use; production-grade availability requires private infrastructure or dedicated providers.

Operational transparency is structurally weak: no status page or published incident response plan exists, which increases confusion and reputational damage during disruptions.

Independent verification is expensive: pruning and fragmented tooling raise the cost of accountability and due diligence.

Upgrade days remain the highest operational risk windows: coordination plus exchange lead times are explicit constraints in Terra Classic operations.